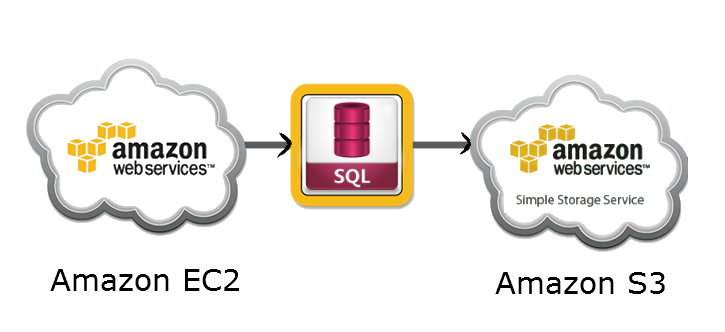

Below is a simple PHP script that creates an SQL dump of a database on an Amazon EC2 server using “mysqldump”, then uploads that dump to an Amazon S3 bucket using the command-line S3 tool “s3cmd”.

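<?php

// Build the dump path once so mysqldump and s3cmd use the same file name.
$file = "/var/www/html/backup/".date("Y-m-d-H-i-s")."_db.sql";

// -u is the MySQL user; the value attached to -p is that user's password.
$sqlbackup = "/usr/bin/mysqldump -v -u root -h localhost -r ".$file." -pdbpassword databasename 2>&1";

exec($sqlbackup, $dumpOutput);

echo implode("<br /> ", $dumpOutput);

$bucket = "s3bucketname";

// s3cmd long options start with two hyphens; --acl-public makes the uploaded dump publicly readable.
exec("/usr/bin/s3cmd put --acl-public --guess-mime-type --config=/var/www/html/.s3cfg ".$file." s3://".$bucket." 2>&1", $uploadOutput);

echo implode("<br /> ", $uploadOutput);

?>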
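As a rough sketch, the same two steps can be wrapped in small functions that compute the file name once, shell-escape each argument with escapeshellarg(), and skip the upload when mysqldump exits with a non-zero status. The function names (backupPath, dumpCommand, uploadCommand, run, runBackup) and their parameters are my own illustrations, not part of the original script. This version also leaves out --acl-public on purpose, so the uploaded dump is not publicly readable.

```php
<?php
// Illustrative sketch: helper names and parameters are assumptions, not from the original script.

/** Return the timestamped dump path, computed once per run. */
function backupPath(string $dir, int $timestamp): string
{
    return rtrim($dir, "/") . "/" . date("Y-m-d-H-i-s", $timestamp) . "_db.sql";
}

/** Build the mysqldump command; every variable part is shell-escaped. */
function dumpCommand(string $file, string $user, string $password, string $database): string
{
    return "/usr/bin/mysqldump -u " . escapeshellarg($user)
        . " -h localhost -r " . escapeshellarg($file)
        . " --password=" . escapeshellarg($password)
        . " " . escapeshellarg($database) . " 2>&1";
}

/** Build the s3cmd upload command (no --acl-public, so the object stays private). */
function uploadCommand(string $file, string $bucket, string $config): string
{
    return "/usr/bin/s3cmd put --guess-mime-type --config=" . escapeshellarg($config)
        . " " . escapeshellarg($file)
        . " " . escapeshellarg("s3://" . $bucket . "/") . " 2>&1";
}

/** Run a command, print its output, and return true when its exit status is 0. */
function run(string $command): bool
{
    exec($command, $output, $status);
    echo implode("<br /> ", $output);
    return $status === 0;
}

/** Dump the database, then upload the dump only if the dump succeeded. */
function runBackup(string $dir, string $bucket, string $config,
                   string $user, string $password, string $database): bool
{
    $file = backupPath($dir, time());
    // && short-circuits, so a failed dump is never uploaded.
    return run(dumpCommand($file, $user, $password, $database))
        && run(uploadCommand($file, $bucket, $config));
}
```

Calling `runBackup("/var/www/html/backup", "s3bucketname", "/var/www/html/.s3cfg", "root", "dbpassword", "databasename")` at the end of the file would run the same dump and upload with the placeholder values used above. It returns true only when both commands exit with status 0.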

Cron entry to run the script every 12 hours (at 00:00 and 12:00):

0 */12 * * * env php -q /var/www/html/s3bkup/s3bkup.php > /dev/null 2>&1

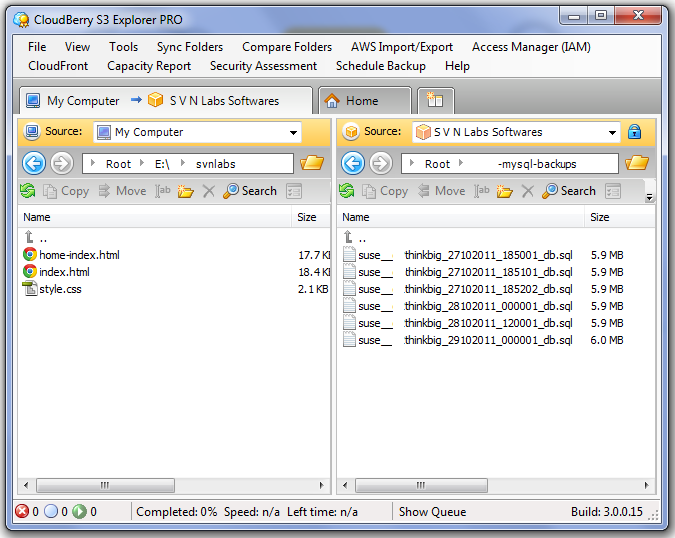

You can schedule the above PHP file as a cron job for automated backups to an Amazon S3 bucket. I mostly use CloudBerry Explorer for Amazon S3 PRO to manage my Amazon S3 files 😉